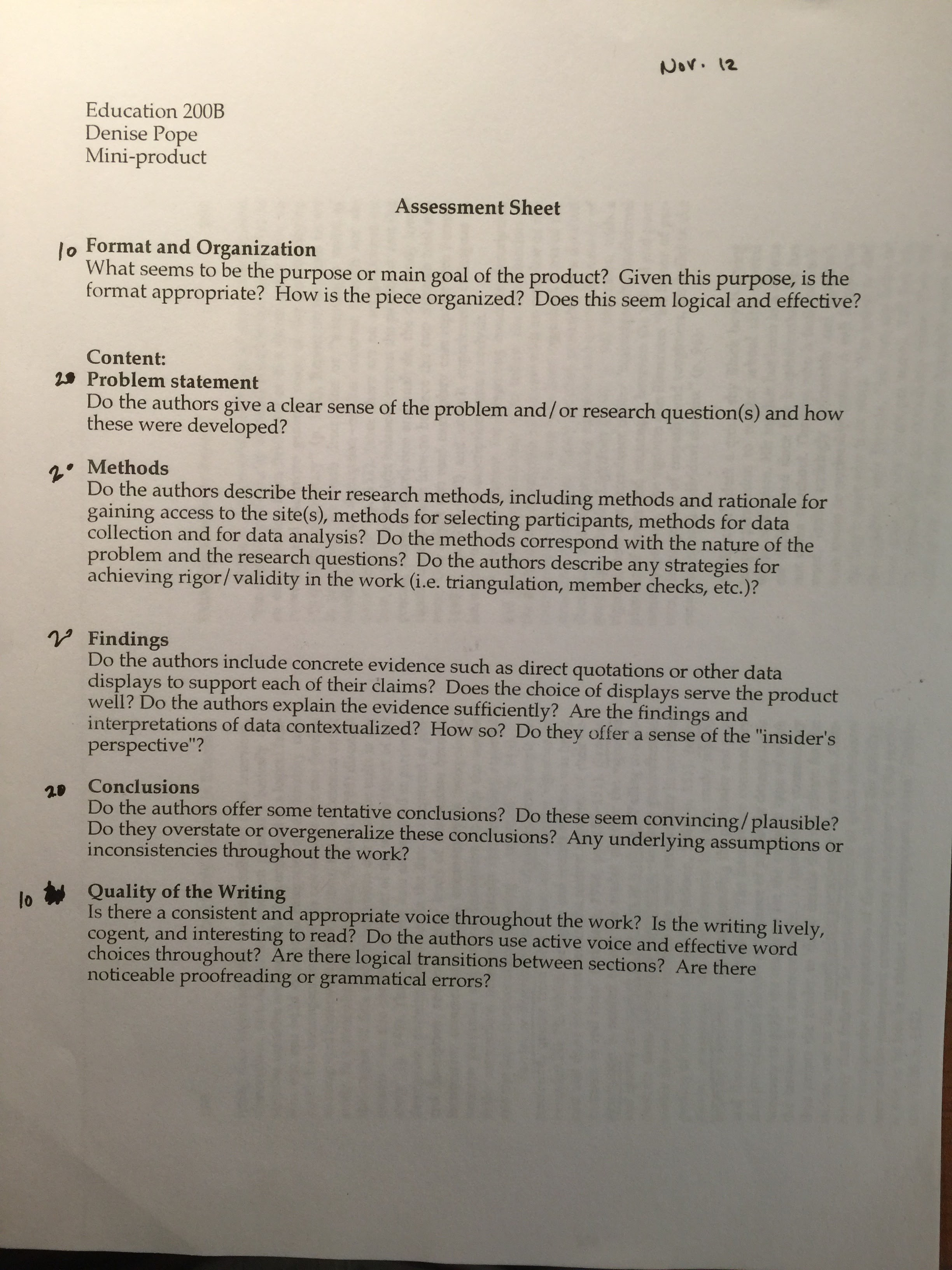

ABSTRACT

In this qualitative study, individuals involved with the Learning Innovation Hub (iHub) were studied to address the research question, “How does iHub facilitate collaboration between educators and entrepreneurs to promote education technology innovation and adoption?” To this end, an observation of the iHub fall 2015 orientation and two interviews with iHub Manager Anita Lin were conducted over the course of three weeks. iHub was found to facilitate collaboration between teachers and startups by seeing teachers as key agents in edtech adoption and focusing on teacher needs. iHub, in turn, does not focus on other stakeholders in the education ecosystem beyond teachers. This raises concerns about iHub’s impact on outcomes for learners.

(Keywords: education technology; edtech innovation; edtech adoption; iHub)

1 INTRODUCTION

Technology has the potential to revolutionize the ways in which we teach and learn. In recent years, a surge of education technologies has pushed more products into the hands of educators and learners than ever before. Investments in edtech companies have skyrocketed as well; during just the first half of 2015, investments totaled more than $2.5 billion, already surpassing the $2.4 billion and $600 million invested in 2014 and 2010, respectively (Appendix A) (Adkins, 2015, p. 4). In the 2012-13 academic year, the edtech market was valued at $8.38 billion, up from $7.9 billion the previous year (Richards and Stebbins, 2015, p. 7). But how do educators find the education technologies that actually improve learning outcomes in a space increasingly crowded with players and products?

The Learning Innovation Hub (iHub) is a San Jose-based initiative of the Silicon Valley Education Foundation (SVEF) in partnership with NewSchools Venture Fund. Funded by the Bill & Melinda Gates Foundation, iHub aims to provide an avenue “where teachers and entrepreneurs meet.” iHub seeks to develop an “effective method for testing and iterating the education community’s most promising technology tools” (iHub website).

To this end, iHub coordinates pilot programs of edtech products in real school settings. The iHub model involves:

(1) recruiting early-stage edtech startups with in-classroom products to apply to the program,

(2) inviting shortlisted companies to pitch before a panel of judges,

(3) selecting participating startups,

(4) matching startups with a group of about four educators who will deploy products in their classrooms,

(5) jointly orienting educators and entrepreneurs prior to the adoption of the technology in the classroom, and

(6) guiding communication among participants throughout the pilot and feedback phase.

iHub plays a unique role in the edtech ecosystem of Silicon Valley given its position as a not-for-profit program that does not have a financial stake in the startups. As such, we are interested in better understanding iHub’s impact on improving learning outcomes through technology. This study seeks to address the following research question:

How does iHub facilitate collaboration between educators and entrepreneurs to promote education technology innovation and adoption?

2 METHODOLOGY

We followed a prescribed sequence from framing our research question through data collection and analysis. Although we did not conduct a formal literature review on the research topic, members of the research team began the project with prior experience of education technology use and adoption in the classroom. We also conducted an informal observation of the organization prior to the official start of the project: we attended the iHub Pitch Games, during which the startups were selected for participation in the fourth cohort. Our subject was selected through a combination of convenience sampling and shared interest in the subject within our team.

2.1 Data Collection

We used three primary sources of data: online documentation, an observation, and interviews. This source triangulation grounds the reliability of our findings and affords us various insights into the native view in order to understand iHub’s strategies for facilitating collaboration between educators and entrepreneurs.

We began our official data collection with the iHub website, which lays out the overarching priorities of the iHub program. The website afforded us a preliminary understanding of what the program does, and we continued to consult it throughout the study. With this written information, we were able to compare what the program claims to do with what the program actually does, as demonstrated in the observation, and with what the program says it does, as elucidated by the interviews.

We continued our data collection by conducting a one-hour observation of iHub’s fall orientation (Appendix B). The orientation represents the first in-person point of contact between participating educators and entrepreneurs of the fourth iHub cohort. Coordinated by SVEF staff and spearheaded by iHub Manager Anita Lin, it served as the ideal occasion for observation, as it showcased iHub’s role as a facilitator of communication and collaboration between educators and entrepreneurs. Bringing everyone together in the same room, the orientation dealt with everything from high-level discussions of the goals of iHub down to the administrative details of the initiative. Both raw and amended notes were kept by all three researchers.

In the two weeks following the orientation observation, we conducted two one-hour interviews with Lin. Lin was selected as the ideal interview subject given her accessibility as gatekeeper to the research team, her position as iHub Manager, and her deep understanding of the iHub initiative. A peer-reviewed interview guide was used in both interviews, though interviewers let questions emerge in situ as appropriate. The first interview sought to garner an understanding of the overarching goals and priorities of both SVEF as the parent organization and iHub as the specific program of interest. We explored what the organization does, what its processes look like, and Lin’s role within iHub. While the interview uncovered some areas for deeper discussion, we intentionally kept the inquiries of the first interview at an introductory level and saved probes for the second interview. The second interview, in turn, homed in on a more granular discussion of iHub’s role in the technology adoption process and learning outcomes. Both interviews were voice-recorded, and approximately thirty minutes of each interview were transcribed (Appendix C).

2.2 Data Analysis

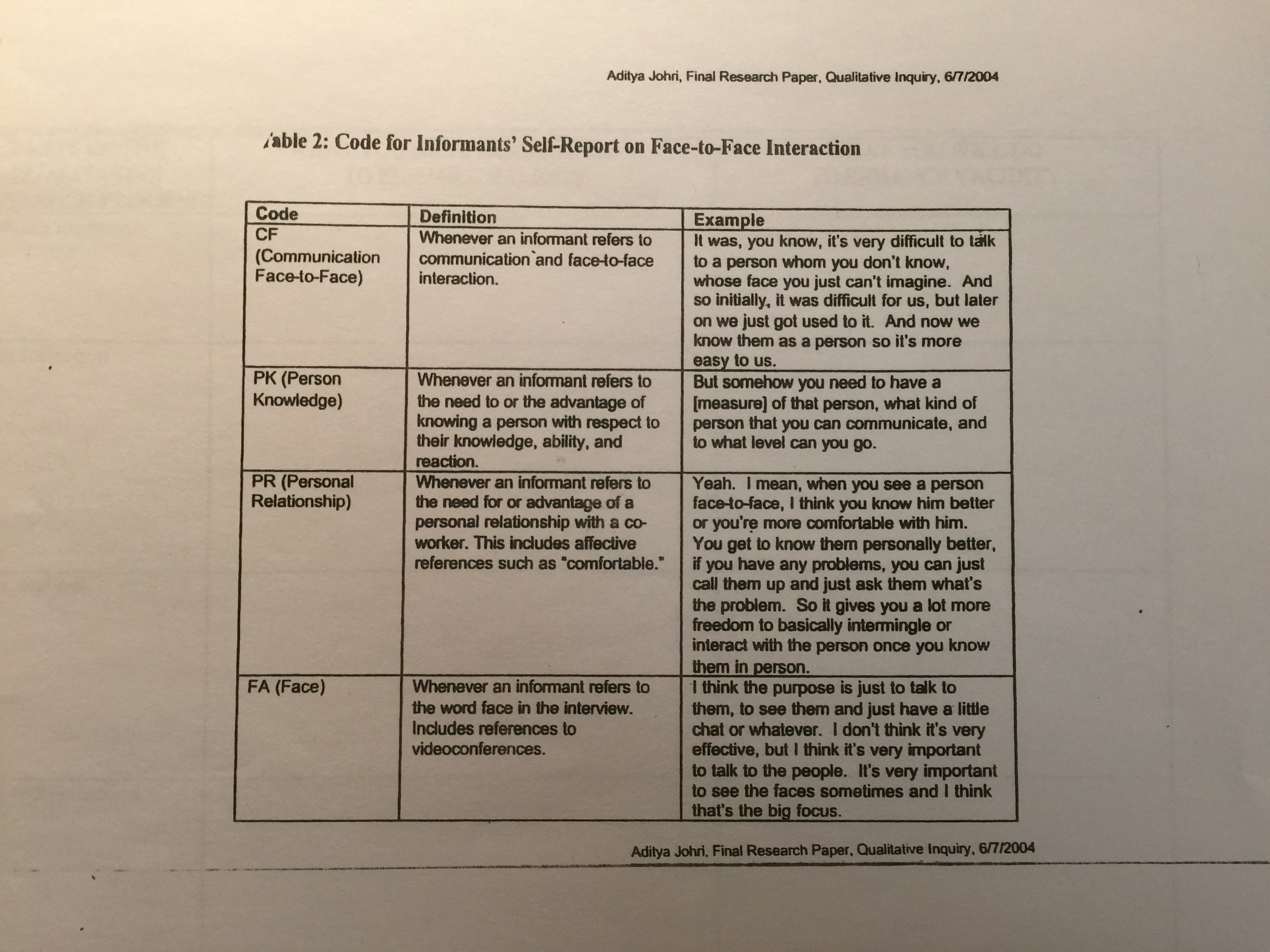

Our data analysis process went hand-in-hand with our data collection process, allowing us to make adjustments to our concepts, themes, and categories throughout our research. While we did not create memos per se, individual research descriptions and reflections served to clarify and elucidate some of the themes and insights that emerged throughout the process. Raw and amended notes and interview transcriptions were coded with the following jointly designed list of codes:

- Educator feedback

- Entrepreneur feedback

- Examples of success

- Examples of challenges

- Focus on early-stage startups

- Focus on educators

- Funding partnerships

- Metrics for success

- Neutrality

- Opportunities for improvement

- Organizational design

- Stakeholder alignment

- Tension between decision makers

- The iHub model/framework

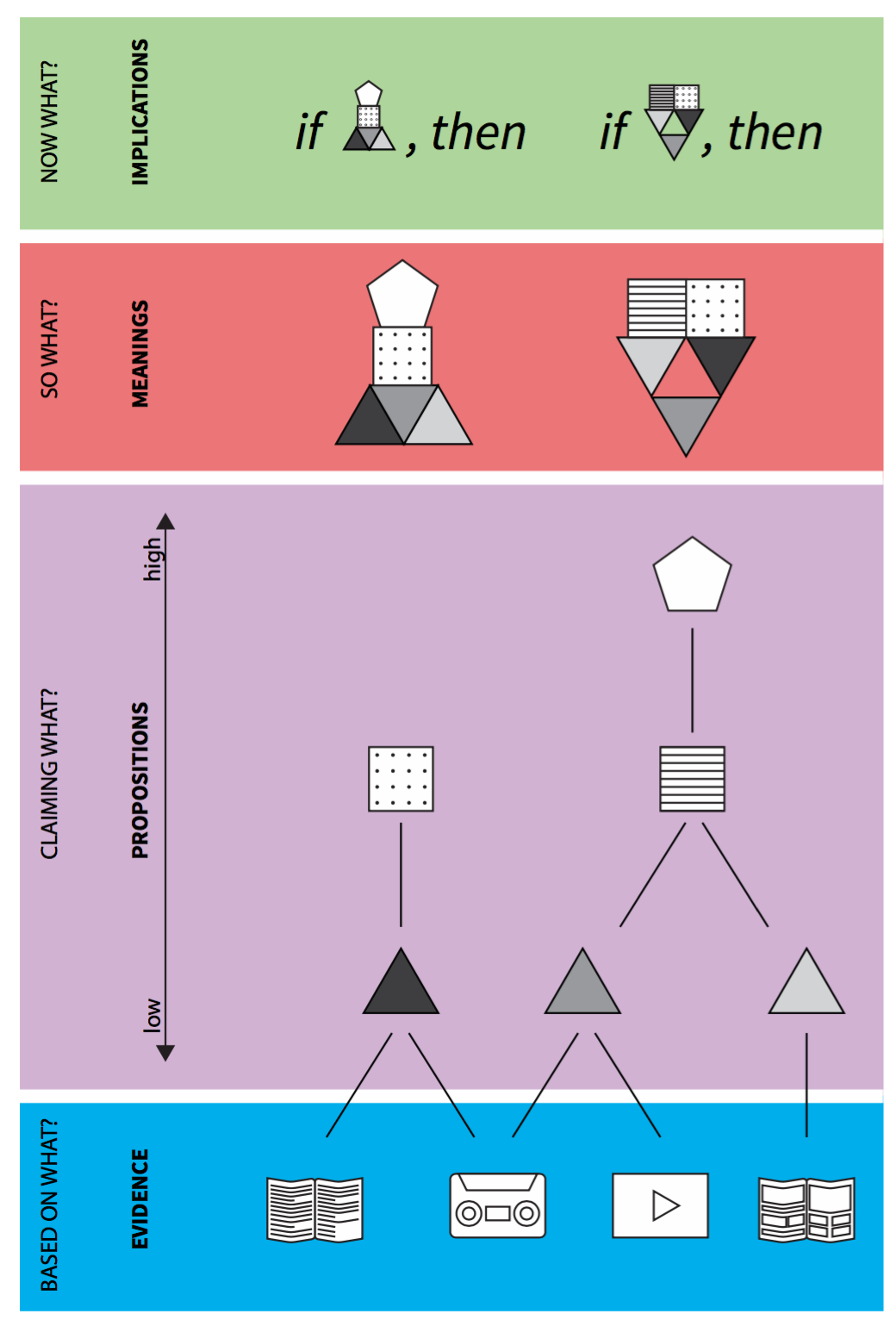

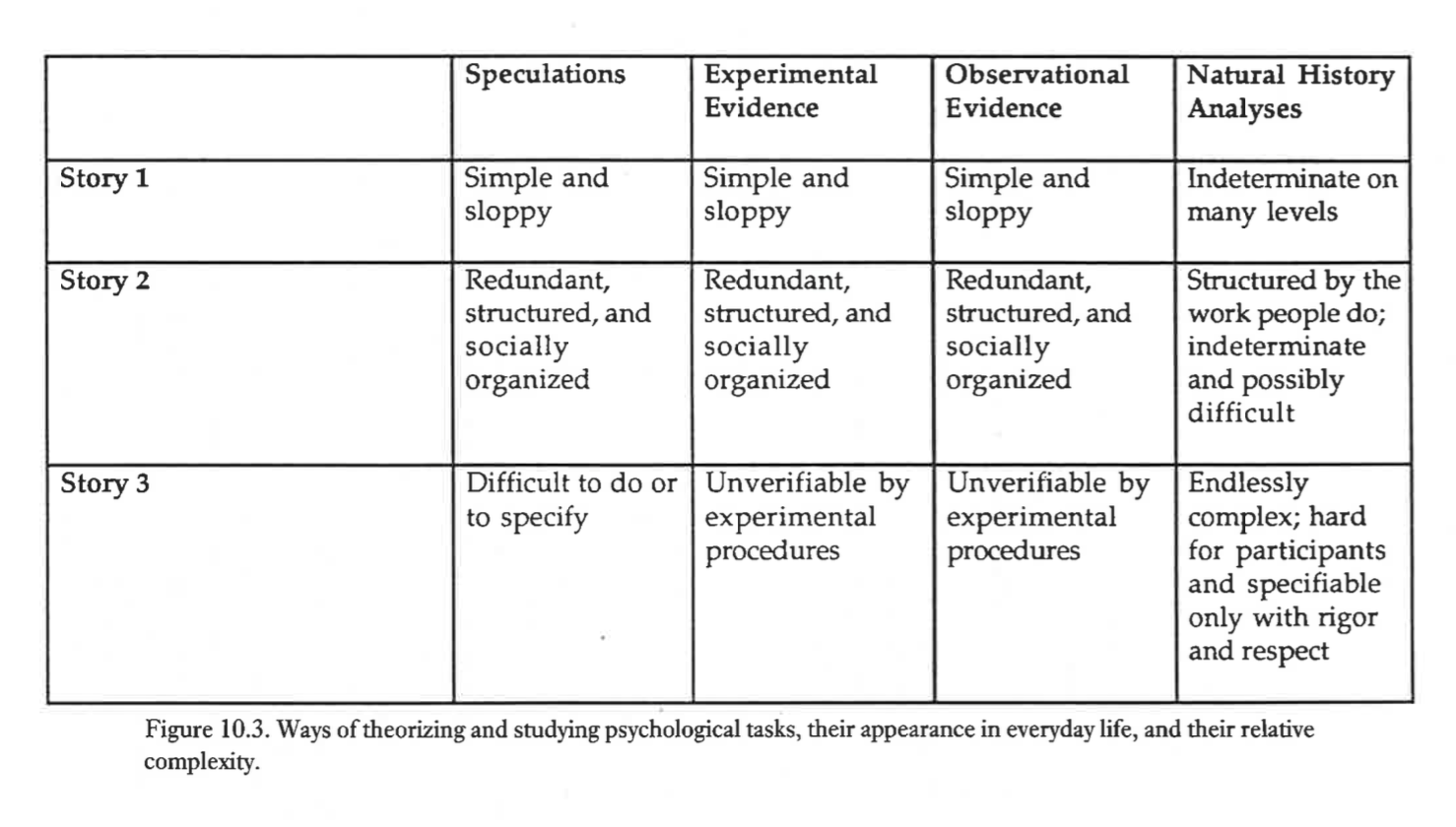

From there, we were able to identify themes and patterns in our data. As our research question seeks to understand a phenomenon, a grounded theory approach proved most appropriate. Using this grounded theory method, we derived the propositions described below.

3 FINDINGS

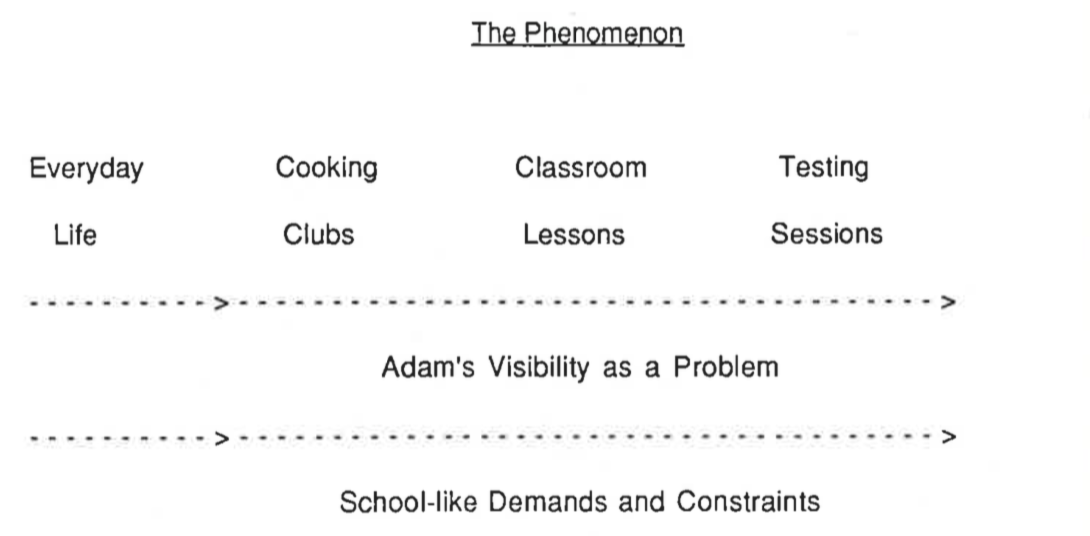

3.1 iHub sees teachers as key agents in edtech adoption.

iHub sees teachers as key agents in edtech adoption. While the organization understands that entrepreneurs, school principals, district managers, and policy makers are all stakeholders in this process, it views teachers as the strongest drivers:

What we have heard from teachers and from districts, is that a lot of times for a school for adopt or…use a product across their school, it’s because a group of teachers have started of saying “I’ve been using this product. I really like this product. Hey, like friend over there! Please use this product with me,” and they are like, “Oh! Yeah we like it,” and kind of builds momentum that way (Interview Transcripts, 2015).

Under this notion that teachers can be strong advocates of edtech products, iHub is looking to adjust its curriculum around teachers as the key agents:

“So we kind of have been thinking about how do we build capacity of teachers to advocate for products they think are working well” (Interview Transcripts, 2015).

They also initiate their pilot cycles with the teachers defining what their current needs are, prior to selecting the entrepreneurs that are going to participate:

“So we send out to our teachers and they’ve kind of, I would say vaguely, have defined the problem of need, and we’d like to kind of like focus them on the future” (Interview Transcripts, 2015).

Innovation, then, is driven by what the teachers need in the classroom. These teachers are handpicked based on their proficiency in adopting technology and their likelihood of giving better feedback and needs statements:

I think teachers who we pick, we try to pick ones who are very…very experienced with using tech in the classroom and so I think that you, you find that teachers who use tech in the classroom, you…it’s like their instruction is different (Interview Transcripts, 2015).

Going further, iHub wants to promote a community of practice to enable discussion and scaffolding amongst teachers open to edtech adoption:

And so I think our program is also to help teachers who are early adopters of technology, help them kind of meet other teachers at different school for early adopters, and build a cohort that understands that and kind of can refer to each other (Interview Transcripts, 2015).

Finally, when looking at entrepreneurs, iHub sees the teachers as the key agents to their success:

So I think that that’s why we’re working with early stage companies because I think it’s, it’s possible to find one now that meets the needs of many teachers and kind of help it kind of just move along (Interview Transcripts, 2015).

3.2 iHub has a focus on teacher needs.

iHub’s belief that teachers are key agents in edtech adoption leads it to focus on teacher needs. Many of iHub’s processes and resources revolve around satisfying the needs and constraints the teachers might have in the process. For instance, the orientation – albeit an event bringing together all participating stakeholders – was framed around the needs of teachers. Rhetoric revolved around “how do we choose the technology we use in the classroom?” (Observation Amended Notes, 2015).

The focus on teacher needs also became evident during our interviews with Lin. In fact, the program’s existence is rooted in the perceived needs of teachers:

Schools DO need edtech products…They want products that do x, y, z. But they don’t really know how to go about and find them. So I think that that’s why we’re working with early stage companies because I think it’s, it’s possible to find one now that meets the needs of many teachers and help it just move along (Interview Transcripts, 2015).

Lin explicitly described one of iHub’s main goals as:

To help teachers who are early adopters of technology, help them kind of meet other teachers at different school for early adopters, and build a cohort that understands that and kind of can refer to each other…we kind of want to help teachers understand how to use it—edtech—in their classroom (Interview Transcripts, 2015).

Furthermore, Lin acknowledged that iHub gives teachers various opportunities to communicate their needs:

There are lots. Every time we have meetings, we are very…open about that. And I also think, we have surveys, so there’s a lot of, we send out a lot of surveys about a lot of…different, specific, different…happenings, and so after…orientation happened, there was a survey that was sent out about that. After the Pitch Games happened, there was a survey about that…I also think that during the rounds if we have strong relationships with teachers, which is typically the case, then teachers are very open with us. At the end, I’ll be like, you know, we’d love to hear your feedback, and they’ll just tell us, you know, we’d love it if there was this, this, this, this (Interview Transcripts, 2015).

Lin also shared two instances in which feedback from teachers was implemented to improve this focus on teacher needs. In the first, Lin pointed out:

We’ve made a lot of those changes based on teacher feedback. Like for example, the reason why teacher teams are at a school site this year instead of from all different schools is partly because it makes sense, I think, to scale, but also because that was one of the big pieces of feedback that was given from the beginning (Interview Transcripts, 2015).

In the second, Lin highlighted a major change in the mechanics of the pilot program: instead of having one teacher per school, iHub now works with teams of teachers from each school. This change was based on feedback from teachers who wanted more collaboration among themselves:

Yeah, so I think for our teachers we would like them to meet up kind of weekly. And when you’re not at the same school it’s a lot harder to meet on a weekly because maybe one night one school has their staff meeting and then the other night the other school has their staff meeting and then, you know, I think it was a lot of commitment to ask and I think a lot of teams found it really challenging and maybe would not always be there because of that (Interview Transcripts, 2015).

Additionally, in her description of the structure of the program, Lin conceded that the number of companies accepted into the program is contingent on the availability of teachers: “For us its capacity of teachers…In our last round we supported 25 teachers. And this round we have about 13 teams of teachers” (Interview Transcripts, 2015). In fact, the single metric for success of the iHub program that Lin identified when prompted was the number of teachers using the iHub model (Interview Transcripts, 2015).

In the discussion on iHub’s priorities, Lin also focused on outcomes for teachers.

3.3 iHub does not focus on the needs of other stakeholders beyond teachers.

Notably, we found no evidence that iHub is focusing on the needs of any stakeholder group beyond teachers. At the foundation of this proposition lies the evidence supporting the previous proposition that iHub is focused on teacher needs; in other words, if iHub is focused on teacher needs, it is by default not focused on the needs of other stakeholders. The following evidence further supports the proposition that iHub does not focus on the needs of other stakeholders.

When asked directly what the ideal relationship between schools and startups would be, Lin responded, “An edtech vendor is a provider, right? So they should be providing some service that fits a need that a school has or a teacher has or a student has in some way” (Interview Transcripts, 2015). She added, “They’re still…an early stage company so they’re…still growing and figuring out exactly what it looks like” (Interview Transcripts, 2015).

When asked about the school’s reciprocal responsibility to startups, Lin responded, “I don’t know, I never thought about that, it, as much that way” (Interview Transcripts, 2015). iHub does not have any expectations for what a teacher should contribute to the relationship.

iHub acknowledges that communication problems have arisen when a startup’s focus is diverted from the pilot program: “And so they became pretty unresponsive with our teachers. The teachers like, emailing me, and I’m like trying to get in contact with it.” However, iHub does not have a process for holding startups accountable: “And so typically when there’s not communication between these parties…the pilot would not be as successful as it could because they weren’t communicating” (Interview Transcripts, 2015). iHub lacks the kind of relationship with startups that would allow it to address an issue such as this.

Lin even identified room for improvement in iHub’s relationship to stakeholders such as entrepreneurs and school districts:

And so how do we support districts where maybe they’re not as on top of edtech, how do we support their administration so that they understand the role of it, understand maybe how select it, and understand how teachers use it so they can provide the support both maybe in resources but also in professional development to their teachers (Interview Transcripts, 2015).

But on a broader scale, iHub demonstrated shallow knowledge of the intricacies of the larger education system:

I would say I don’t know enough about school districts and about school…counties, offices, to be able to know whether or not they’re functional. There’s a lot of bureaucracy, I think, that comes up when you work with the county and work with…there’s so many different needs and so many different people kind of working on it that sometimes…they can’t, they’re unable to kind of do certain actions because of different reasons, whatever they are. So I don’t know (Interview Transcripts, 2015).

Finally, there is little evidence of any direct concern with learning objectives and student satisfaction. The few moments in which students were mentioned follow:

I talked about how it helps students but really a big point, I think a big selling point for districts is that it helps teachers, we give a lot of teacher professional development during that time. (Interview Transcripts, 2015)

Yeah, so I think we just want to make sure the, the products are really relevant to students, right. And so, that’s the way we do it, is that you get feedback from students and teachers, but I think those needs change, right? Each year, this year, the needs are different than last year, because this year you’re using Common Core, and last year, maybe, it wasn’t as big of a, actually it was really big last year. (Interview Transcripts, 2015)

Only when prompted for a specific success story did Lin share the story of one student with some light motor disabilities who learned from the entrepreneur’s product:

I think also a lot of the other teachers who worked with that product really, their students really enjoyed it, because it is really engaging and they were making like, connections between the fact that, you know, I’m doing math… And I think that was a like a really wonderful example of a product that went really well (Interview Transcripts, 2015).

4 LIMITATIONS

To gain a better sense of what edtech adoption really looks like within iHub’s process, we would have needed to conduct several further observations. We were able to see the process in its very early stages but feel that observing the classroom setting and feedback meetings would reveal more interesting data.

What we observed was also not as relevant to the overall research question as the interviews were. What we saw was the very first meeting between teachers and startups. We observed the initial questions and doubts about the use of the products, but no teacher had yet used them. It would have been interesting to observe the products already running in the classroom.

Our limited prior knowledge of what the organization did, what we were observing, and its overall goal also reduced our capacity to probe deeper into the subject matter. We might have chosen to interview a teacher or a startup instead of the manager of the program, for instance.

5 CONCLUSION

iHub views teachers as key players in edtech adoption given their position to advocate for technology products among their colleagues and other stakeholders within the system, and this view of teachers has led it to focus on teacher needs. Our evidence demonstrates that iHub’s goals, its active pursuit of teacher feedback, the changes implemented within the program, and the program’s metrics for success all point to this focus on teacher needs. Insofar as iHub is focusing on teachers’ needs, it can no longer prioritize the other stakeholders in the education ecosystem. In fact, our evidence shows that this has led to a weak relationship with other stakeholders, including entrepreneurs, districts, and learners.

5.1 Implications

Our conclusion raises concerns about the ethics of iHub’s facilitation of edtech adoption. In optimizing to meet the needs of teachers, iHub is not focused on optimizing for learner outcomes. In our evidence, less priority is given to students, learning objectives, and teaching pedagogy. iHub is trusting that teachers operate with the best interests of their learners in mind, but we cannot be certain that this is always the case. We do not actually know the real implications of the iHub program for learning outcomes, and no research is being done to understand whether there is benefit or detriment to students.

REFERENCES

Adkins, S. (2015). “Q1-Q3 International Learning Technology Investment Patterns,” Ambient Insights. http://www.ambientinsight.com/Resources/Documents/AmbientInsight_H1_2015_Global_Learning_Technology_Investment_Patterns.pdf

Learning Innovation Hub website. Retrieved on 2015, December 7 from http://www.learninginnovationhub.com/

Richards, J. & Stebbins, L. (2015). “Behind the Data: Online Course Market–A PreK-12 U.S. Education Technology Market Report 2015,” Education Technology Industry Network of the Software & Information Industry Association. Washington DC: Software & Information Industry Association.

APPENDIX A: Investments Chart

Source: Adkins, S. (2015). “Q1-Q3 International Learning Technology Investment Patterns,” Ambient Insights. http://www.ambientinsight.com/Resources/Documents/AmbientInsight_H1_2015_Global_Learning_Technology_Investment_Patterns.pdf

APPENDIX B: Orientation Observation

APPENDIX C: Interview Transcripts

Interviewee: Anita Lin, iHub Manager at Silicon Valley Education Foundation (SVEF)

Interview 1: Oct 29 2015

Interview 2: Nov 5, 2015

13:49:00

[Ana] What would you say, um, the goals specifically of innovation is, the innovation group within SVEF is?

[Anita] I think the goal is to find…find innovative things that are happening in education and help support their growth. That’s what I think the innovation side is focused on.

[Ana] Ok. So you pointed to the three different stakeholder groups that are kind of important as you’re going towards your mission, which are students, teachers, and, um, districts [yeah], um. But you said that they’re not always aligned. And so how does, in the work that you’re doing bringing the different stakeholders, and adding even a fourth stakeholder to that, how do you try to align those different groups?

14:53:01

[Anita] Yeah, that’s a good question…We, when I think about it, what I mean is mostly that when you find ed tech companies and you recruit them, some ed tech companies are focused on students and the classroom experience; some ed tech companies are focused on the school experience, or like maybe making life easier for teachers, which I would consider different than a product that…instructs students for math; and then some products, right, are learning management systems, and those are for your district. You want to be able to use them across the district so that all the information is centralized. And so those, so when, so depending on the person who’s looking at a product and their position in that whole spectrum – a student, a teacher, a principal even, an instructional coach, or like someone in the district – the way they look at a product is different. That’s what I meant by that. [mhm] So, does that answer your question=

[Ana] Yeah, yeah, yeah absolutely. And how, could you speak to the challenges of, like, actually aligning [yeah] those groups.

[Anita] Yeah. I think that’s always a tension that happens in education, not just…within our work, but as a whole…sometimes, for example, here’s an example that I ran into last weekend. Someone was telling me, they worked with one of our companies previously. But…what happens in their district is very, I think they’re very on top of the policies basically, and so they approve certain, certain companies for use in the classroom because they meet all the privacy laws and all those…all these requirements they set, so I think privacy laws and more. And so because for some reason the district didn’t approve this one product she had been using before, and so this year she can’t use it. And so, right, to her, she, the way this teacher sees it is like, well, like my students really want to do it, I really want to do it, why can’t I just do this? But then the district sees it as like, you know, we have a process. This didn’t fit our criteria for some reason or the other. And so therefore, we don’t allow it. Right? And so then there’s that tension, and I think we’re still figuring out how you solve that. [yeah] I think it’s, I think it’s a tension for anything, ’cause even curriculum, that happens in curriculum…textbook curriculum adoption. So, [yeah] yeah.

17:17:05

[Ana] So do you see a role for SVEF, in that specific situation, to facilitate [yeah] alignment?

[Anita] So we kind of have been thinking about how do we build capacity of teachers to advocate for products they think are working well. We also have been thinking about how we support districts in understanding ed tech. So if a district, right, we know that some districts are really on top of ed tech in Silicon Valley and some are not. [mhm] And so how do we support districts where maybe they’re not as on top of ed tech, how do we support their administration so that they understand the role of it, understand maybe how select it, and understand how teachers use it so they can provide the support both maybe in resources but also in professional development to their teachers.

[Ana] Can you give a specific example?

[Anita] Yeah, so we haven’t done this yet exactly, which is why maybe I don’t have a great example, but in the spring, we’re thinking about how do we build capacity of the district. And so we are thinking about convening some…instructional tech directors in a meeting and having them kind of talk about challenges they faced or things they’ve done really well in implementing education technology in the classroom. And so some work that supports this (inaud), which I mentioned earlier, is that we do these ed tech assessments where we go to different school districts and…walk them through an ed tech assessment from hardware all the way to software. So do you have enough access points? To do you provide training for your teachers when you do Google Classroom or whatever product they’re using. So we kind of want to use that to support our teachers.

18:55:02

[Ana] Yeah. Do you have a, (three-second pause) I guess, (three-second pause) where would you be, what point in the process are you in this now, if you’re thinking about it for the spring?

[Anita] So we have been, that’s a good question, so we’ve, we’ve done a couple ed tech assessments in the area that we’re kind of targeting right now so the East Side Alliance area…and we are targeting the last week of July as like, sorry not the last week of July, January, as…this director get-together…so I think we’ll kind of get an aggregate report from that data and then run some sort of roundtable with these directors. So that’s kind of what we, we have the idea, we kind of have an idea of when it would be. We are working with PricewaterhouseCoopers, PwC, with, to implement this work. And so they’re creating a project plan currently. And so then we’ll kind of partner with them to execute on that.

20:03:00

26:40:00

[Lucas] You’re good?

[Anita] umhum

[Lucas] Alright so… hum… you guys good… hum… so… I think, hum… we’re gonna dive into a little bit more about the model you mentioned

[Anita] Ok

[Lucas] So if you could tell me in your own words what’s the process that the startups go through with iHub prior to Pitch night?

[Anita] So we’re recruiting startups that are early stage so, what I would say we define that between Seed and Series A, hum… but I think it’s probably more broadly interpreted than that and so… We kind of reach out to contacts we have in the Bay Area and maybe a bit nationally and ask them to help us pass on the message that we are kind of looking for Ed Tech companies that are, that could be used in some classrooms specifically.

[Lucas] Ok

[Anita] From there companies apply online through a, like, a Google Forms. It’s pretty simple. It’s a very short application process, but I do think we’ll probably add to that next year. hum. And then following a certain time period I convene the invites of different people to be part of a short list committee. And so our short list committee consists of venture capitalists, it consists of accelerator partners and then also people from the education community so that typically is maybe a like an Edtech coach of the school or an IT Director at a school. Hum. Potentially some educators as well. So we kind of bring together this, this committee that, from all of our applications we filter it down to 12. Then we ask those 12 to pitch at our pitch game and we kind of ask them “Hey, focus on things you used in STEM classrooms” and we, we invite judges that are business judges. So typically CEOs of big companies in the area and then also education leaders. so we had [name of person] one. There’s also, hum… we’ve also had people who (whispers) trying to think of who else… (normal) we’ve had educators, we’ve also had IT Directors as well ‘cause we kind of think, you know, they’re different slices of the education world so we have both of those be there and then they pitch and then the judges ultimately select the pool of companies that we work with for the round.

[Lucas] So you mentioned there’s 12 applicants… 12 selected [uhum] and then how many go to hum, the actual orientation?

[Anita] We pick between 6 to 8 companies [6 to 8] This last round we picked 6 hum, I think we have 11 pitches so that’s probably what we have.

[Lucas] Uhum… Is there any, hum… reason for this number?

[Anita] For us its capacity of teachers [capacity of teachers] so we support, in our last round we supported 25 teachers. And this round we have ’bout 13 teams of teachers. And so we kind of didn’t wanna companies to support more than 2 or 3 although I think… we… we… we didn’t wanna it to be super challenging for companies to support and also since they are early stage products, we found that some companies as they’re taking off, like, they get really busy and they’re like, completely immersive so… I think it’s to balance both the support aspect but as well is kind of the teachers that we can support also.

[Lucas] Uhum… so let’s go a little bit back, uh, what happens between the pitch night and orientation in terms of your interactions?

[Anita] So we send out to our teachers and they’ve kind of, I would say vaguely, have defined the problem of need, and we’d like to kind of like focus them on the future. Make ’em define it a lot more clearly. Hum… but have… we send out… I send out a form that kind of says, you know, “You’ve seen these companies at the pitch round. Here’s some more information about them if you’d like”. And I ask them to preference these different companies. So like, 1, 2, 3, 4 I mean we have them preference them whether or not, they’re going to work with all of them. And so, we… then they preference them and I kind of match them typically if I can I just give them their first choice of company that they’d like to work with cause I think that (mumbles), builds a lot of investment in our process, hum… and then by orientation they know who they are working with and then we kind of tell them that all of… We’ve already told them all the program requirements before but we kind of go over them at orientation and then go over… let them meet their companies for the first time.

[Lucas] Great. And how about after orientation? What happens?

[Anita] So after the orientation we kind of let them go and set up their products for about a week or two depending on the time crunch we have from the end of the year and then… for the next couple of weeks they use the products in the classroom. There might be some observations but I would say these observations are mostly from a project management perspective more than like, an evaluative one. And then they submit feedback. And so we have some templates that we give them that we ask them to submit feedback from. There’s probably have a guiding question for each one and each week we’ll update that guiding question. Also we use a platform called Learn Trials which kind of gets qualitative feedback generally from these teachers about the product and includes comments but also has a rubric that they kind of use. And we’ve asked for pre and post assessments in the past that our teacher created ahm, but this probably hasn’t been… we have not been doing that. I think we need to find a better way to incorporate, so…

[Lucas] So, so… tell me a little bit more about this tool for the Qualitative Assessment. You said the name was?

[Anita] Learn Trials – and so they have a rubric that assesses an ed tech company across different strands whether that’s usage, whether that’s how easy was it for it to set up. And they kind of just rate them almost like grading it you know, like give it an A, give it a B. So like kind of like over time. And we ask them to submit it in different, like different… on a routine, so every 2 weeks or something. Where you’re able to kind of see how the product performs over time.

[Lucas] And am I correct to assume that after orientation the process goes towards, until the end of the semester?

[Anita] Yes – so it’s only until the end of this semester. So typically December, I want to say like 18th

[Lucas] And then what happens?

[Anita] And then at the end of this orientation we, SVEF, maybe with the help of some of our partners like LearnTrials will aggregate some of this data and will share that out with the community. Additionally for this round something we’d like to do is maybe then from our 6 companies that we work with, work with a few of them and help them… help support implementation in the school versus just a couple classrooms at a school. So we’re still figuring this spring what that exactly looks like in terms of the implementation of the, these products but that’s something that we’d like to do.

[Lucas] And when you say community you mean both teachers, schools and the EdTech companies? You share it with everyone?

[Anita] Yeah

[Lucas] Hum… so what other events or resources you provide that have like similar goals or priorities? Or is this the only…

[Anita] Like within our organization? [yes] Well, in terms of teachers support, like, our Elevate Program I know… I talked about how it helps students but really a big point, I think a big selling point for districts is that it helps teachers, we give a lot of teacher professional development during that time. And so I think our program is also to help teachers who are early adopters of technology, help them kind of meet other teachers at different school for early adopters, and build a cohort that understands that and kind of can refer to each other. Humm… so we also do some, some I would say professional development is not as extensive as all of it is but we kind of want to help teachers understand how to use it, EdTech in their classroom. Potentially, referencing… Our goal is to reference the SAMR model. So..

[Lucas] Uhum… And is this whole process the first cycle you guys are going through, or you have been doing this for a while?

[Anita] So we started our first round in the Spring of 2014 – so this is technically round 4 but we’ve iter… like… it changes… little pieces of it change each round. So in the past when we’ve done it, when I run it, it was just I would recruit individual teachers from schools and so then I would form them onto a team so maybe a school, a teacher from school A, a teacher from school B, and a teacher from school C. And in this round I re…, we did recruitment where I recruited teacher teams. So now it’s like 3 teachers from school A, 3 teachers from school B, and then they are all using the same product at their school site so I think that helps with the piece of collaboration that was harder to come by earlier.

[Lucas] And how was it harder?

[Anita] Yeah, so I think for our teachers we would like them to meet up kind of weekly. And when you’re not at the same school it’s a lot harder to meet on a weekly because maybe one night one school has their staff meeting and then the other night the other school has their staff meeting and then, you know, I think it was a lot of commitment to ask and I think a lot of teams found it really challenging and maybe would not always be there because of that. Hum… So… that was a big shift from that. But I think it really builds a community within their school. And I think, what we have heard from teachers and from districts, is that a lot of times for a school to adopt or you know, use a product across their school, it’s because a group of teachers have started off saying “I’ve been using this product. I really like this product. Hey, like friend over there! Please use this product with me,” and they are like, “Oh! Yeah we like it” and kind of builds momentum that way [uhum]

[Lucas] So yes, so I guess that talks to the implementation phase of the, of the software that they were trialling. Hum… could you tell of us of a, a specific hum, aaaaa, ww… what do you call this phase after orientation? the pilot? [the pilot] phase. The Pilot Phase. So. Yeah. Could you tell us one story that things went really well or things went really badly?

[Anita] Sure! So, there was a product that was used in the last round where I felt like, it was really… we saw a lot of interesting things happen, hum… but they’re all like lot of qualitative metrics. So it’s called Brain Quake, and actually the CEO and cofounder, he’s the… he actually was an LDT graduate, hum… but… it’s basically this game on an iPad or… whatever, where you can play… you have little keys and you have to line the keys to get this little creature out of a jail essentially, but, what was interesting is when you turn the gears it also kind of teaches an eight number sense, so it’s like, this interesting puzzle that kids kind of enjoy solving. And so he was using this in some classrooms in the Bay Area. Also one in Gilroy and this teacher was a special ed teacher. And so she was kind of showing them this and so… What I think was really, really successful that I found was that for one of her students, they had trouble with like motor skills. And so one of the skills that they had trouble with was kind of like turning the gear on the iPad. Hum. But the student actually learned to turn the gear like to the left. Cause you can turn it both ways and they were able to like, learn that skill moving like, doing a different motor skill than they had before because they really wanted to play the game. And so I thought that was like a really wonderful example of how technology can be really inspirational or like really helpful versus I think there are other, you know. Well, lots of examples in literature where technology just like, you know, it’s just a replacement for something.
Hum… and so… I think also a lot of the other teachers who worked with that product really, their students really enjoyed it, ‘cause it is really engaging and they were making like, connections between the fact that, you know, I’m doing math and they could see h… they could understand that, you know, if I redid this into an algebraic expression… like they were coming up with that terminology and then they were like “we could just rewrite this into an algebraic expression”. And I think that was a like a really wonderful example of a product that went really well.

[Lucas] Did that product end up being hum, adopted or implemented in the school [Yeah, so…] effectively?

[Anita] That just happened this spring and I don’t think it has been yet. Hum… they’re still also like an early, you know like an early stage company so they’re, I think they’re still growing and figuring out exactly what it looks like. But I think that we are trying to support companies in that way. And we’re still figuring that out. So…

[Lucas] And was there ever hum… a big problem in a pilot?

[Anita] Yeah, let me think… typically I would say the problems that we run into in a pilot is where, companies are like working with their teachers and it’s going well but then sometimes companies get really… I guess it depends, now that I think about it. In the fall of last year there was one where the company like, the developers got really busy cause they’re just, start-up just took off. And so they became pretty unresponsive with our teachers. The teachers like, emailing me, and I’m like trying to get in contact with it, and so typically when there’s not communication between these parties, it would… the pilot would not be as successful as it could because they weren’t communicating. Things weren’t changing. Hum… In the spring, one of the things… The biggest challenge we found was actually testing. So testing was happening for the first time for Common Core and so what would happen was these teachers that email me, being like “I can’t get a hold of the Chromebook carts”. Like, they just couldn’t get access to the technology they needed to run their pilot. And so… one teacher… her district told her this before she like committed to the pilot. And she just like pulled out. Like she’s like “I just can’t do this” like “I don’t have access to these, to like, the technology that I need”. Hum… But some other teachers, they were like, one of them told me she had to like had to go to the principal and like beg to use the Chromebooks on a like… on a day that they weren’t being used, but, I think because it was the first year of testing, a lot of schools and a lot of districts were very, hum, protective of their technology cause they just wanted to make sure it went smoothly. And that totally makes sense.
And so… for… because the… the testing when it… kind of… varied like when this would happen for the different schools but, some schools were more extreme in like saying, you know, we’re just gonna use it for all this quarter… like we… like, you know, we’re gonna lock it up and then others were like “Well… we’re not testing now so feel free to use it” So… That was a big challenge in our pilot this spring.

41:55

Interview 2

[James] So, do you, do you se-, do you see other people sort of coming in and filling that spot? Um bes- I mean, iHub, right?

[Anita] Yeah?

[James] Um, has anyone else tried to do that or…?

[Anita] Yeah, actually, that’s a good question. That makes me think of something else. Uh, the county sometimes does it.

[James] Mhm.

[Anita] So especially in California where there’s small districts, uh, there’s a district in Santa Clara County that has like two schools.

[James] Mhm?

[Anita] There’s a couple districts in San Mateo County that have three schools, and so this is like, you know two elementary schools, one middle school? They, the county, can be assisting in kind of helping develop tech plans. Maybe not per se rolling out of… it may not be rolling out of specific technology but they kind of help support infrastructure. They may also help with, let’s say if three districts in a county want to purchase a certain product, and they’re really small districts, so like, so their total makes like eight schools?

[James] (laughs)

[Anita] Right? The county can kind of help facilitate a purchasing plan with the other schools, so that way prices are more fair for the, that, those schools.

[James] Yeah.

[Anita] So I guess the county does sometimes play a role, but it depends on the county. It depends on the county leadership too.

[James] Mhm, and have you noticed um, how well they do?

[Anita] So, I think San Mateo is one of the counties that does well in this. Uh, I know that they’ve had some, they’ve definitely assessed their schools in San Mateo County two years ago for tech and how in-, the infrastructure is. I actually have a website that I can share with you about that. But it tells you like the ratio of how many like IT personnel there is to students, like but it doesn’t say anything about software. I think it’s simply in tech- tech adoption, it’s simply like the infrastructural side, not like software.

[James] Yeah.

[Anita] But that’s important, right?

[James] Yeah.

[Anita] You can’t have that without, you can’t have software without hardware, so, you know.

[James] Yeah, no, that is very true. Um, and so in, in cases like, like San Mateo, um what do you think iHub like sort of adds to the mix then?

[Anita] Yeah, so, I think because San Mateo County isn’t per se te-testing software,

[James] Mhm.

[Anita] Our goal is really to help support software implementation.

[James] Mm.

[Anita] And seeing, you know, what works in software, what works in edtech that way, um, I think they’ve done a lot of the, the other research. And also, I think it’s changed a lot. Like two years in the tech, edtech world is a long time.

[James] Yeah.

[Anita] Like two years ago, it was, it, the landscape looked different, like Khan Academy like different. Some of these startups don’t exist, right? So, or maybe they did and they folded.

[James] (laughs)

[Anita] Like there was something, Amplify, I think?

[James] Oh,

[Anita] So, =

[James] Joel Klein.

[Anita] = So, so I think there’s a lot of like change in the world? So, I think that’s a big, I think you have to re-, continually assess in order to in order to have like a better read. So I think, iHub, helps in, it can help in supporting the county. We’re trying to create like a systematic way to like do that, I guess, is assess kind of the edtech side infrastructure but also create a model so piloting of edtech, especially new edtech, is easier, and then there’s a route that’s more clear-

[James] Mm.

[Anita] -to the question for what works and what doesn’t.

[James] So, sort of, um, so would you say, so just to repeat, sort of like to rehash-

[Anita] Mhm?

[James] -what you’re saying, it’s, you’re sort of setting like this, um, like the front runner, right? You’re setting like this sort of example?

[Anita] That’s the goal. I think is to some sort of model that you can follow, like implement, like a flow chart almost.

[James] Mhm.

[Anita] But we know that school districts are different, so there probably will be some choices or wiggle room in some of these decisions. But I think that’s the goal.

[James] Mhm. And, um, and you may have already touched on this. So what do you think is the, (pause) what DO you think is going to be like the iHub sort of like place in the world?

[Anita] I think the research part is really important. So I think school districts can always fund a lot of the research, and I think if we, we now have a process for matching and school support. But, I think the research cycle really brings it all together, so if we are able to create a strong research process-

[James] Mhm?

[Anita] -uh, then it will be able to, schools will be able to kind of use the research process and say like, “This works, we should use this. This doesn’t work. This is why.” Give them feedback, hopefully they’ll change, the companies will change.

[James] Mhm.

[Anita] And then, move forward.

[James] Yeah. So how, how do you see that sort of unfolding?

[Anita] How do what?

[James] (in a clearer voice) How do you see that unfolding?

[Anita] Yeah, that’s a great question.

[James] (laughs)

[Anita] That research side is always the side that EVERYONE in this field struggles with. Um.

[James] Mhm?

[Anita] I think right now, there’s more and more literature on it, so we kind of start from there. We also work with different partners, so we’re kind of thinking about, uh, I know another group is doing design-based implementation research, so DBIR research. Uh, but it’s kind of the goal that everyone in the group—so the teachers, the adaptive helpers, the students—everyone plays a role in kind of designing, kind of giving feedback. It’s like implemented in the classroom, but they kind of altogether give feedback so that, over time, the product gets better.

[James] Mhm.

[Anita] Um, but in the world of research, (3) I think we’re kind of, WE are kind of on the exploratory research side slash design slash implementation side, so we’re like earlier. And so I think, we’re, we’re still learning a lot about the field-

[James] (laughs)

[Anita] -about what that looks like. So we have things in place, but I think we’re trying to make them more robust.

[James] Mhm. (in a softer voice) Very cool. (in a louder voice) Um, and so, we went rogue for a little while there. Uh, so, let me backtrack a little bit. What do you think, what do you think is sort of the ideal relationship between um, edtech company and school or educator?

[Anita] Hm, that’s an interesting question. I mean in my head an edtech vendor is a provider, right? So they should be providing some service that fits a need that a school has or a teacher has or a student has in some way.

[James] Mhm.

[Anita] So that’s how I imagine the relationship is, is that they’re providing something to the student. But at the same time, obviously, that providing something, it, it’s a ben-, there’s some benefit to the student, or teacher, or classroom that it brings (…) efficiency? Right? It could be classroom efficiency. It could also be like differentiating or like being able to adapt to each person where they’re at in the classroom. Um, but I think there has to be some sort of benefit to it.

[James] Mhm.

[Anita] Yeah.

[James] So that’s from the um, that’s from the edtech company to the educator.

[Anita] Yeah.

[James] And what about vice versa?

[Anita] I think in my head, it brings I think it helps, I think it just, I think they’re able to give feedback? I don’t know, I never thought about that, it, as much that way. But I imagine that if a product is doing well, then it also provides, like over time, it’ll provide feedback, and that product will continue to get better, and it will continue also to grow in usage around the area.

[James] Mhm.

[Anita] Where, not necessarily around this regional area, but in the area that it’s being used.

[James] Mhm. Um, and would you say sort of, I mean, so iHub, your, your core mission, right, um is to, your value proposition was to, you know, sort of facilitate this interaction=

[Anita] Mhm?

[James] = Uh, do you think you, how, how would you want to like, I guess, how would you want to facilitate that ideal relationship, um?

[Anita] Yeah, so I think there are ways that we’re still working on to figure out exactly what that looks like, especially thinking like five years in the future?

[James] Uh huh?

[Anita] But for now, I think no one kind of facilitates these relationships so we take the place to do that.

[James] Mhm.

[Anita] Uh I think in like ten years, ideally, we wouldn’t have to do that because schools and districts would be doing that internally, right.

[James] Yeah.

[Anita] They would be able to set aside part of their budget to pilot products, not to pay the products, but maybe to pay the teachers and, or, maybe they don’t even, like, it’s part of the integral process of how you’re teaching so it’s related to the professional learning that happens.

[James] Mhm.

[Anita] In school. Um, and then they would use data collected from these pilots as decision points for whether or not to purchase the product, and then if they don’t purchase the product, or even if they do, kind of give that feedback to companies so that companies can change their product to be more appropriate for the education world.

[James] Mm. Can you elaborate a little bit more on that, actually? It’s uh…

[Anita] Yeah, so I think we just want to make sure the, the products are really relevant to students, right.

[James] Mm.

[Anita] And so, that’s the way we do it, is that you get feedback from students and teachers, but I think those needs change, right? Each year, this year, the needs are different than last year, because this year you’re using Common Core, and last year, maybe, it wasn’t as big of a, actually it was really big last year.

[James] (laughs)

[Anita] Maybe two years ago wasn’t the same, right?

[James] Mhm?

[Anita] So I think that that’s a big…

[James] Big?

[Anita] Difference. Yeah.

[James] Yeah.

[Anita] So.

[James] Um, and is there other things that you, is there um, anything you would either do, well, what would you want to keep the same or would you want to do differently, or would you want to sort of sustain? Do more of?

[Anita] Yeah, I think there’s a lot of things that are good right now for matching process. I think that it’s really helpful that we have lots of connections to districts, so I think that we need to continue to maintain those relationships, but also continue to grow them.

[James] Mhm?

[Anita] Uh, I think starting with the problem of practice. So having teachers kind of come in with the need they want a product to use to fill-

[James] Mhm.

[Anita] -um, is important. But I think maybe something to change on that front is also how you help them define that problem of practice, because I think some teachers come in and say like, “We really want differentiation lists in math in third grade.” But then when they finally see the companies that are selected, they’re like, “Oh wait, we really want to do something else.” And it’s like, was that really a need of yours? Or were you just kind of saying that because it sounds like a need that everyone’s talking about?

[James] Yeah.

[Anita] Um, and so I think helping teachers really focus on a problem of practice, that’s something that we’re learning to work on, but (clears throat) this year at least, it was at least stated, versus in the past, it wasn’t even stated at all.

[James] (laughs)

[Anita] So continuing to going, going down that path is really important-

[James] Mhm.

[Anita] -in the matching process slash vetting process. Um, I think something that has been good especially in the Bay Area is working with early stage companies =

[James] Mm.

[Anita] =and so we work with early stage companies to, you know, it, it’s a good place to be for that. So I think for us, that’s a really good niche.

[James] Mhm.

[Anita] Um, but I do think as time goes on, something that needs to kind of change in the work is that we have to support both early stage companies but also like mid, like later stage companies, so that you know, teachers change their practice or you know, like, it, is it really affecting students if it’s in ten classrooms, right? Not really.

[James] (laughs)

[Anita] So like, I mean, it does, but, you know. It could have a wider, wider effect if there are more, if there are more, it was in more, if it’d shown that it actually should be in more.

[James] Mhm.

[Anita] So. Other things, I think research similarly like, we have some protocol, some usability research, but I think it would be nice to step into a little, especially for later stage companies, how do you help with maybe, more specifically efficacy research? Which is how well or how well this product’s meeting a need that it said it’s meeting, that it said it’s trying to fix.

[James] Mhm.

[Anita] So, (in a very soft voice) that’s one, (in a slightly louder voice) one thing I guess.

[James] Yeah.

[Anita] Mhm.

[James] So that, this is actually quite interesting the um, I guess for me, the, the idea of you know, early versus late stage, right?

[Anita] Mhm?

[James] Um you, you brought up that that’s sort of, you see that as your, as your niche, right? Is the early stage.

[Anita] Yeah.

[James] Um and I can, I can guess as to why, but can you, can you tell me a little bit more?

[Anita] I think one of the big challenges is, in edtech, it’s like there’s so many edtech companies so it’s how do you kind of bring to the surface the ones that are promising? So, I think our goal in vetting the companies is to bring to the surface some of the more promising early, like, edtech companies and kind of help them go from early to mid. I think there’s a big jump from those two and some people don’t (laughs) don’t make it.

[James] (laughs)

[Anita] Actually a lot of people don’t make it. =

[James] (while laughing) A lot of people don’t make it.

[Anita] =Yeah, a lot of people don’t make it.

[James] (laughs)

[Anita] Most. So.

[James] (while laughing) Yeah.

[Anita] I think that’s the goal.

[James] Yeah.

[Anita] Yeah.

[James] And, and so when you, when you call your, your niche, that sort of implies like a competitive advantage, right? Um for iHub=

[Anita] Mhm.

[James] =specifically? Um and so, um yeah, I mean, yeah. Could you elaborate more about this, that, that idea?

[Anita] Yeah, and I think, well I think that’s mostly because right now, schools DO need edtech products. Like they des-, they want it. They want products that do x, y, z. Um but they don’t really know how to go about and find them. So I think that that’s why we’re working with early stage companies because I think it’s, it’s possible to find one now that meets the needs of many teachers and kind of help it kind of just move along.

[James] Yeah.

[Anita] And it’s quote unquote adoption learning. Which, I use that word. I don’t really love=

[James] (laughs)

[Anita] =the word “adoption.” I think it has a lot of loaded meanings, but.

[James] Um (laughs).

[Anita] Yeah.

[James] And, and wh- why, why do you think iHub is uniquely sort of in the position to do, you know, to like really understand that?

[Anita] Mhm? I think it helps that with a lot of partnerships we’ve previously formed, I also think that since we’re neutral, we’re not a school, we’re not an edtech company. I think that that puts us in a position to facilitate those relationships well.

[James] Mhm.

[Anita] Uh I think if we were a school, then we’d be constantly thinking about like, “how much does it cost?” Like, uh other things, that I feel like schools HAVE to think about.

[James] Yeah.

[Anita] Which I mean, are very important. We also keep those in our head when we’re recruiting, but I think it also gives us some neutrality, I think, as well, so. And with, the other side is that we’re not really affiliated with edtech venture funds, or like incubators, right. We have partnerships with them, but we’re not like soliciting. Or we’re not trying to make a sale, so school districts are more willing to work with us because we’re not like, “You have to use this product because we’re going to like make money from the fact that you use this product.” =

[James] Mhm.

[Anita] =It’s just like, “Oh, from the tests that WE did, and the research that we, research that we’ve done with other teachers, they really enjoy this product specifically for these things.” So, yeah.

[James] Um, and do you think, do you think, or how hard or easy or whatever do you think would be for, not a competitor, but like another sort of um, iHub model to come up and sort of, you know, also add to, add to the ecosystem?

[Anita] Yeah, so, the compa-, the other groups that we work with kind of do similar tests they run. We call them test beds. They have similar test beds. But the three that have been funded so far, we focus on early stage, I would say, iZone kind of focuses on design implementation research, and then Leap focuses on impact or efficacy research-

[James] Mhm.

[Anita] -so we kind of do have similar people in the space, but not here in the Bay Area.

[James] Yeah.

[Anita] I do think there are more and more coming up. I think (3) it would, I mean, it’s good to have more people doing research about this because no one knows how to do it well.

[James] Mhm.

[Anita] So, I think that would be a good, it would be good in some ways, obviously. And then, obviously, for, in other ways it would be more competitive for us.

36:54:04

[Ana] We’ve been talking a lot about the opportunities for educators to give feedback back [yeah] to the startups to improve their products. Are there any opportunities for the entrepreneurs and the educators that are involved in this partnership to give feedback back to SVEF?

[Anita] Oh yeah. There are. I never mentioned those, but there are lots. Every time we have meetings, we are very…open about that. And I also think, we have surveys, so there’s a lot of, we send out a lot of surveys about a lot of…different, specific, different…happenings, and so after…orientation happened, there was a survey that was sent out about that. After the Pitch Games happened, there was a survey about that…I also think that during the rounds if we have strong relationships with teachers, which is typically the case, then teachers are very open with us. At the end, I’ll be like, you know, we’d love to hear your feedback, and they’ll just tell us, you know, we’d love it if there was this, this, this, this. And that’s been helpful, and we’ve made a lot of those changes based on teacher feedback. Like for example, the reason why teacher teams are at a school site this year instead of from all different schools is partly ’cause it makes sense, I think, to scale, but also because we…that was one of the big pieces of feedback that was given from the beginning. So.

38:15:00

[Ana] Great, that’s awesome. Um, another question that comes to mind is, you mentioned that, uh, you’re working with earlier stage companies as opposed to [yeah] later stage companies or startups. Um, when you think about how that impacts, or how that affects the actual impact of a product in a classroom (two-second pause), what comes to mind?

[Anita] I don’t know if I understand=

[Ana] Yeah, let me totally rephrase that. (observer sneezes) Bless you. Do you think that working with earlier stage startups, as opposed to later stage startups, impacts the, or affects the impact that a company can have on learning in a classroom?

39:10:08

[Anita] Uh. Could. I mean, it depends right ’cause if (pauses for three seconds) yeah and no, I mean, it depends…if you’re thinking about it like with time or if you’re thinking about it like short term or long term I guess. So one of the organizations that we work, a different (inaud) does this work with mature companies. And so what they do is they work with schools who have already kind of been using specific products in the classroom, and then they do very specific research using…data points and observation and kind of tells these schools…yes, this product works or…no, we don’t really think this product works…And I think that’s important for schools to know whether or not they’re paying for something that doesn’t bring any learner outcomes, right…or isn’t helping their teachers…adjust to 21st century learning or you know, just changing the way they teach…I think for us the goal is that, you know, we kind of help with this, this market where it’s a little, it’s not very defined…no one is really guiding these people. So they just come up with an idea, and they just kind of throw it out. And if it works, that’s great, and if it doesn’t, then not. But I think we’re kind of hoping to pull out some of those that work. But I think ours, the goal would be like it’s a longer term. You would find out over long term if it works versus something that’s more like yes that works or no that doesn’t work right away. So, yeah.

[Ana] Do you think there are any negative repercussions of trying out products that are so early stage on real learners?

[Anita] Yeah, good question. I think that it’s definitely a possibility. I would say that, I would say that I think teachers who we pick, we try to pick ones who are very…very experienced with using tech in the classroom and so I think that you, you find that teachers who use tech in the classroom, you…it’s like their instruction is different and so you know, depending on, I think they can make learning happen with almost, with different, different pieces. And so I think that’s one way we kind of counter from it. But it is true. But I think it’s like would you rather have teachers do that without any oversight as to whether or not that works? Or would you rather have them do it with some facilitation as to whether or not it actually, there’s some…you know, conclusion at the end like yes, it works or yes, it doesn’t. I think teachers sometimes already do that in the classroom. So. Yeah.

41:45:01

[Ana] Interesting. (eleven-second pause) I think that it’s interesting that you said that ideally in ten years, an organization like SVEF would be out of business=

[Anita] Well, I would say that the iHub Program. (both nod) Yeah. We do a lot of other shit. But=

[Ana] Yeah.

[Anita] I don’t know if that’s…an organizational goal. I think that would make the most sense, right, ’cause I think, in my head, if you…if you identified a problem and you’re able to solve it…that’s great. (laughs)

[Ana] Yeah. Do you, do you think that’s actually possible?=

[Anita] Going to happen? I don’t know. I think it’s hard to say because I would say I don’t know enough about school districts and about school…counties, offices, to be able to know whether or not they’re functional. There’s a lot of bureaucracy, I think, that comes up when you work with the county and work with…there’s so many different needs and so many different people kind of working on it that sometimes…they can’t, they’re unable to kind of do certain actions because of different reasons, whatever they are. So I don’t know. But that would be like, in an ideal world to be able to give a model to a school district, to any school district and be like if you kind of adopt this to your school…this is a way you could pick technology for your classroom, classrooms, and also give feedback to these developers, and then developers would also have a clear path to entry, which I think is a big issue in the market.